Analyzing fuzzy data doesn’t have to be slow: we taught FCA algorithms to ‘speak fuzzy’ natively

Analyzing data with graded (fuzzy) values has long been a bottleneck for Formal Concept Analysis. We present a new family of native algorithms that are dramatically faster than the traditional ‘scaling’ approach.

Real-world data is rarely a simple “yes” or “no”. It’s often graded, uncertain, or “fuzzy” (e.g., “hot” to a degree of 0.8, “important” at 0.6). Formal Concept Analysis (FCA) is a fantastic tool for analyzing this kind of data, but its fuzzy version has a historical problem: computing all the concepts is slow.

In our latest paper, accepted to the prestigious Q1 journal Fuzzy Sets and Systems, we tackle this bottleneck and present a solution that changes the game.

🧐 The problem: the universal “translator”

Until now, the standard way to analyze a fuzzy dataset was… to stop treating it as fuzzy.

The method is called “scaling” or “wrapping”. It takes a fuzzy problem (small, with graded values) and “translates” it into a binary problem (enormous, with only 0s and 1s). A classic binary algorithm is then run on it, and finally the result is “translated” back into the fuzzy world.
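To make the size blow-up concrete, here is a minimal Python sketch of one standard scaling construction from the fuzzy FCA literature: each object x becomes pairs (x, a) and each attribute y becomes pairs (y, b), one per truth degree, and (x, a) has (y, b) whenever a ⊗ b ≤ I(x, y). We assume the Gödel t-norm (⊗ = min) and a five-degree scale; the post does not say which logic or exact scaling the InClose2_w setup uses, and the helper names `scale` and `scaled_size` and the toy data are hypothetical.

```python
from itertools import product

# Assumed truth scale (a 5-element chain); not taken from the paper.
DEGREES = [0.0, 0.25, 0.5, 0.75, 1.0]

def godel_tnorm(a: float, b: float) -> float:
    """Goedel t-norm a ⊗ b = min(a, b) (an assumption; other t-norms also work)."""
    return min(a, b)

def scale(objects, attributes, incidence, degrees=DEGREES):
    """Build the binary context (X x L, Y x L, I_x) where
    ((x, a), (y, b)) is in I_x iff a ⊗ b <= I(x, y)."""
    scaled_objects = list(product(objects, degrees))
    scaled_attributes = list(product(attributes, degrees))
    relation = {
        ((x, a), (y, b))
        for (x, a) in scaled_objects
        for (y, b) in scaled_attributes
        if godel_tnorm(a, b) <= incidence[x, y]
    }
    return scaled_objects, scaled_attributes, relation

def scaled_size(n_objects: int, n_attributes: int, n_degrees: int):
    """Rows and columns of the scaled binary table."""
    return n_objects * n_degrees, n_attributes * n_degrees

# Hypothetical 2 x 2 fuzzy context.
objects = ["x1", "x2"]
attributes = ["hot", "important"]
incidence = {("x1", "hot"): 0.75, ("x1", "important"): 0.5,
             ("x2", "hot"): 0.25, ("x2", "important"): 1.0}

objs, attrs, rel = scale(objects, attributes, incidence)
print(len(objs), len(attrs))     # 10 10
print(scaled_size(100, 150, 8))  # (800, 1200)
```

Under this construction, the 2 × 2 fuzzy table already becomes a 10 × 10 binary one, and a context with the dimensions of the largest benchmark scenario reported in the results (100 objects, 150 attributes, 8 truth degrees) would become an 800 × 1200 binary table.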

This process is mathematically correct, but incredibly inefficient. It’s like trying to understand a book in English by translating it to German, analyzing it in German, and then translating the conclusions back to English. You waste a huge amount of time and computational resources.

💡 Our solution: teaching algorithms to “speak fuzzy”

We asked ourselves a simple question: if the Close-by-One (CbO) family of algorithms, such as InClose5, is among the fastest for binary data, why not teach it to understand fuzzy data directly?

Instead of using the “translator” (scaling), we modified the internal engine of these algorithms to operate natively with fuzzy values (degrees of membership, residuated lattices, etc.).
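In practice, “speaking fuzzy” means that the two derivation operators at the heart of FCA work directly on graded sets. The sketch below assumes the Gödel residuated lattice on [0, 1], whose implication is a → b = 1 if a ≤ b, else b; the toy context and the function names `up`, `down`, and `closure` are ours for illustration, not code from the paper.

```python
def residuum(a: float, b: float) -> float:
    """Goedel implication a -> b (assumed logic)."""
    return 1.0 if a <= b else b

def up(extent, objects, attributes, incidence):
    """A↑(y) = min over x of (A(x) -> I(x, y)): degree to which all objects in A have y."""
    return {y: min(residuum(extent[x], incidence[x, y]) for x in objects)
            for y in attributes}

def down(intent, objects, attributes, incidence):
    """B↓(x) = min over y of (B(y) -> I(x, y)): degree to which x has all attributes in B."""
    return {x: min(residuum(intent[y], incidence[x, y]) for y in attributes)
            for x in objects}

def closure(intent, objects, attributes, incidence):
    """B↓↑: the smallest fuzzy intent containing B."""
    return up(down(intent, objects, attributes, incidence),
              objects, attributes, incidence)

# Hypothetical 2 x 2 fuzzy context.
objects = ["x1", "x2"]
attributes = ["hot", "important"]
incidence = {("x1", "hot"): 0.75, ("x1", "important"): 0.5,
             ("x2", "hot"): 0.25, ("x2", "important"): 1.0}

start = {"hot": 0.5, "important": 0.0}
print(down(start, objects, attributes, incidence))     # {'x1': 1.0, 'x2': 0.25}
print(closure(start, objects, attributes, incidence))  # {'hot': 0.75, 'important': 0.5}
```

No binary table is ever built: starting from the intent {hot: 0.5, important: 0}, the closure comes back as {hot: 0.75, important: 0.5}, so the data itself reveals that the shared degree of “hot” is 0.75. This is the kind of information the “smart skip” exploits.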

The result is a new family of algorithms, like FuzzyInClose5, that are:

- Mathematically equivalent: We formally prove that our native algorithms find exactly the same set of concepts as the scaling method. No rigor is lost.

- Much, much faster: By skipping the “translation” step, they avoid blowing up the size of the problem and get straight to the point.
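The equivalence claim can be sanity-checked on a toy example: count the fuzzy concepts directly (brute force over every graded intent) and count the ordinary concepts of the scaled binary context. This is a hypothetical check, assuming Gödel logic, a three-degree scale {0, 0.5, 1}, and the scaling in which (x, a) has (y, b) iff min(a, b) ≤ I(x, y); it illustrates the claim and does not replace the formal proof in the paper.

```python
from itertools import chain, combinations, product

# Assumed scale and hypothetical 2 x 2 fuzzy context.
DEGREES = [0.0, 0.5, 1.0]
OBJECTS = ["x1", "x2"]
ATTRIBUTES = ["hot", "important"]
I = {("x1", "hot"): 1.0, ("x1", "important"): 0.5,
     ("x2", "hot"): 0.5, ("x2", "important"): 1.0}

def residuum(a, b):
    """Goedel implication a -> b (assumed logic)."""
    return 1.0 if a <= b else b

def native_concept_count():
    """Brute force: count fuzzy intents B with B↓↑ = B."""
    count = 0
    for values in product(DEGREES, repeat=len(ATTRIBUTES)):
        intent = dict(zip(ATTRIBUTES, values))
        extent = {x: min(residuum(intent[y], I[x, y]) for y in ATTRIBUTES) for x in OBJECTS}
        closed = {y: min(residuum(extent[x], I[x, y]) for x in OBJECTS) for y in ATTRIBUTES}
        if closed == intent:
            count += 1
    return count

def scaled_concept_count():
    """Count ordinary concepts of the scaled context (X x L, Y x L, I_x)."""
    objs = list(product(OBJECTS, DEGREES))
    attrs = list(product(ATTRIBUTES, DEGREES))
    # Goedel t-norm: a ⊗ b = min(a, b)
    related = {(g, m) for g in objs for m in attrs if min(g[1], m[1]) <= I[g[0], m[0]]}
    intents = set()
    # Close every subset of scaled attributes; distinct closed sets = concepts.
    for subset in chain.from_iterable(combinations(attrs, k) for k in range(len(attrs) + 1)):
        extent = [g for g in objs if all((g, m) in related for m in subset)]
        intents.add(frozenset(m for m in attrs if all((g, m) in related for g in extent)))
    return len(intents)

print(native_concept_count(), scaled_concept_count())  # 4 4
```

Both counts are 4 here, but the binary side had to work on a 6 × 6 table instead of the original 2 × 2 one.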

🛠️ The extra trick: the “smart skip”

On top of the native adaptation, we introduced an optimization that is only possible in the fuzzy world; we call the resulting algorithm InClose5*.

Imagine the algorithm is testing the truth value 0.5 for an attribute. As it does the math, it realizes that the “true” conceptual value for that attribute is actually 0.75.

- A normal algorithm: Would continue testing 0.6, then 0.7, and so on… wasting time.

- Our InClose5* algorithm: Is smart enough to say, “Ah, the real value is 0.75. I will skip directly to 0.75 and save all the intermediate steps.”

This “skipping” or “blacklisting” strategy for redundant values provides a spectacular additional speedup, especially in lattices with many degrees of truth.
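Here is a minimal sketch of the skip for a single attribute y. It is our illustration, not the paper’s InClose5* code: it ignores the other attributes and CbO’s canonicity test, assumes Gödel logic, and uses a hypothetical scale with step 0.05. The key fact is that if testing degree a gives a closed degree d ≥ a for y, then every degree in (a, d] produces the same child extent, so the search can jump straight past d.

```python
from bisect import bisect_right

# Hypothetical truth scale 0, 0.05, ..., 1 (the post does not fix the scale).
DEGREES = [k / 20 for k in range(21)]
OBJECTS = ["x1", "x2"]
# One attribute y; toy degrees chosen so that testing 0.5 yields a closed value of 0.75.
I = {"x1": 0.75, "x2": 0.45}

def residuum(a, b):
    """Goedel implication a -> b (assumed logic)."""
    return 1.0 if a <= b else b

def closure_value(extent, a):
    """Add (y, a) to the current extent and return the closed degree of y."""
    # C(x) = min(A(x), a -> I(x, y)): restrict the extent to objects having y to degree a
    child = {x: min(extent[x], residuum(a, I[x])) for x in OBJECTS}
    # D(y) = min over x of (C(x) -> I(x, y))
    return min(residuum(child[x], I[x]) for x in OBJECTS)

def scan(extent, start, skip):
    """Test the degrees of y above `start`; return (tested degrees, closed degrees found)."""
    tested, found = [], set()
    i = bisect_right(DEGREES, start)  # first degree strictly above the current value
    while i < len(DEGREES):
        a = DEGREES[i]
        d = closure_value(extent, a)
        tested.append(a)
        found.add(d)
        # Smart skip: all degrees in (a, d] give the same child, so jump past d.
        i = bisect_right(DEGREES, d) if skip else i + 1
    return tested, found

top_extent = {x: 1.0 for x in OBJECTS}
start = min(I.values())  # degree of y in the top concept's intent (0.45)
plain, plain_found = scan(top_extent, start, skip=False)
smart, smart_found = scan(top_extent, start, skip=True)
print(len(plain), smart)           # 11 [0.5, 0.8]
print(plain_found == smart_found)  # True
```

The plain scan tests all 11 degrees from 0.5 to 1.0, while the skipping scan tests only 0.5 and 0.8, yet both discover the same two closed degrees (0.75 and 1.0). With finer truth scales, the gap between the two grows.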

🚀 The results: it’s fast. Really fast.

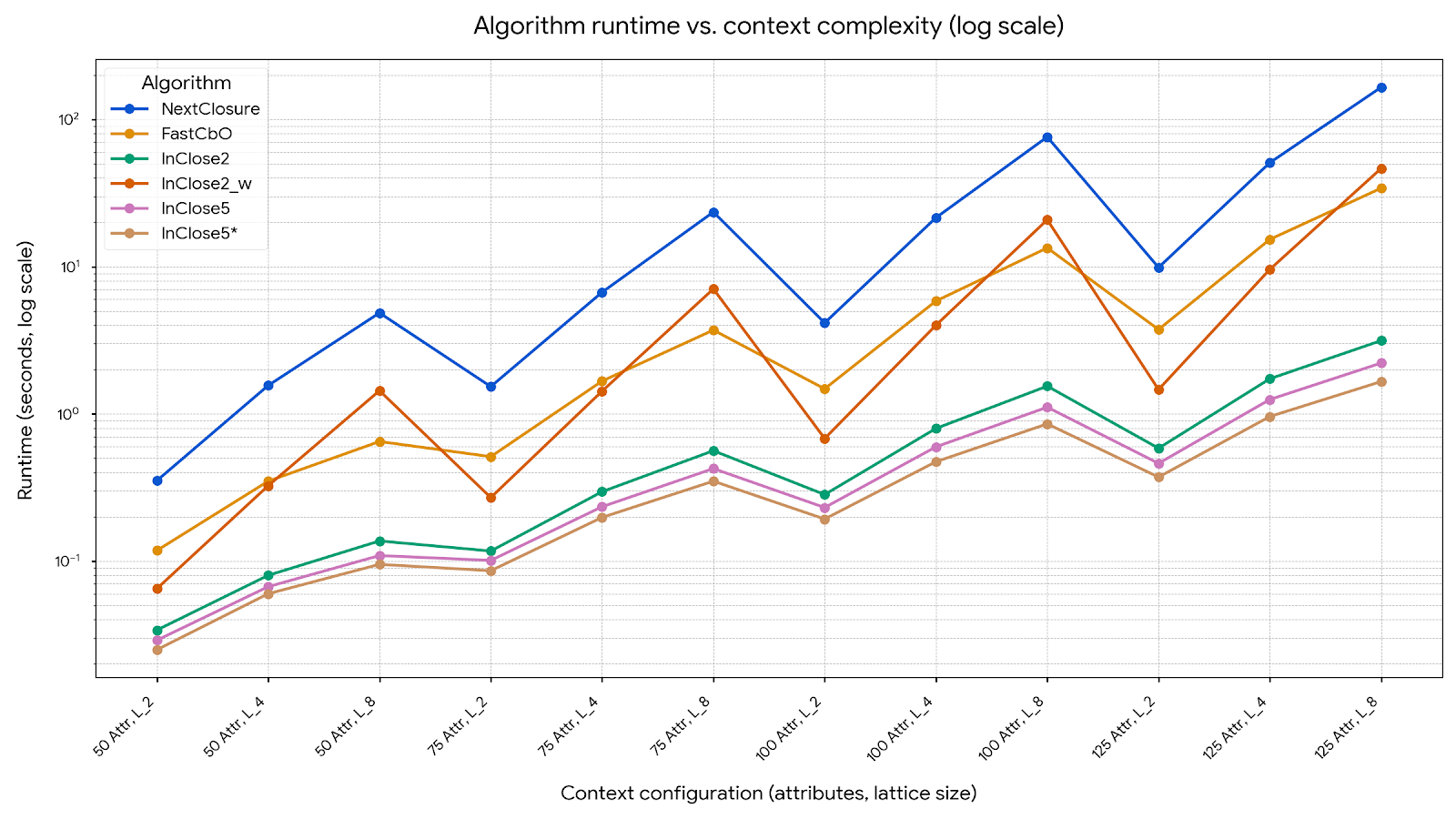

We benchmarked our new algorithms (FuzzyFastCbO, FuzzyInClose5, FuzzyInClose5*) against the existing methods: the fuzzy NextClosure and the scaling method (InClose2_w).

The results on both real and synthetic data are conclusive: the native approach wins by a landslide.

In the chart above, the running times of the existing methods (NextClosure and InClose2_w) shoot up, while our new versions (green, purple, orange) stay flat. Less time is better.

In one of the most complex scenarios we tested (100 objects, 150 attributes, 8 truth degrees), the numbers speak for themselves:

- NextClosure (old): 889.5 seconds.

- InClose5* (ours): 8.1 seconds.

We turned nearly 15 minutes of computation into just 8 seconds, a speedup of roughly 110×.

🔬 Why does this matter?

This work isn’t just an academic optimization. It’s a performance leap that makes fuzzy FCA practical for real-world problems. We can now analyze larger and more complex datasets (with more attributes or more degrees of “uncertainty”) in reasonable amounts of time.

This opens the door to using these tools in more demanding applications, from more accurate recommender systems to analyzing social networks with graded relationships.

The next step is to explore how attribute ordering affects this performance to shave even more seconds off the clock.

📖 The full paper

If you want to know all the technical details, the correctness proofs, and the full experimental design, you can read the original article, which has been accepted in Fuzzy Sets and Systems.

Close-by-One-like algorithms in the fuzzy setting: theory and experimentation. Authors: Domingo López-Rodríguez, Manuel Ojeda-Hernández, Ángel Mora, Carlos Bejines. Journal: Fuzzy Sets and Systems (vol. 520, 109574)